A unit owner opens an email from management: “Chargeback for insurable damage to other units.”

They do what any calm, reasonable person would do. They paste the whole thing into an AI tool and type:

Write me a reply that shows I am not responsible.

Make it firm. Add legal authority.

Fifteen seconds later, AI delivers a masterpiece. It’s twelve paragraphs long, formatted like a factum, and confidently cites a dozen “leading cases,” including several supposedly from the Condominium Authority Tribunal on insurance deductible chargebacks.

Minor issue: the CAT does not have jurisdiction over insurance deductible chargebacks. Also, at least half the cases do not exist.

Now you have a unit owner sending management a polished legal brief that is wrong in a way that is both impressive and deeply unhelpful. The manager and board read it, see all those case names and file numbers, and suddenly worry they are about to be featured in a reported decision. So they reach out to their lawyer to confirm what’s real, what’s made up, and what actually matters.

And just like that, a straightforward chargeback issue turns into a hefty legal bill no one needed. It’s happening more often now that these tools are widely available (case in point, this CAT case Levon blogged about).

Why Everyone’s Suddenly Communicating Like a Robot

AI tools can draft emails, conduct research, or summarize documents incredibly quickly. That is the whole appeal. Instead of spending Saturday angrily rewriting a complaint about a neighbour’s midnight party, someone types:

“Write a firm but polite email asking the manager to enforce the noise disturbance rules.”

Instantly they have something polished, calm, and vaguely intimidating, avoiding the classic move of ending with an angrily typed ALL CAPS signoff: ‘AND ANOTHER THING…’

The Upside

Used properly, AI can take the heat out of a conflict. It is fast, it can soften tone, and it can help people say what they mean clearly. Sometimes that alone prevents a minor issue from becoming a full-blown dispute.

The Pitfalls

Used carelessly, it can escalate conflicts, start new ones, and create “legal-sounding” nonsense that everyone then has to pay to untangle.

Tone problems: AI-drafted messages can come out overly formal, oddly casual, or unnecessarily aggressive.

Overkill: Not every issue needs a mini factum. A two-sentence request for clarification can become a five-page essay.

Legal mistakes: AI is not a lawyer. Condo disputes are fact- and document-specific. A bot can sound confident and still be wrong about what the declaration, rules, or the Act actually require.

Privacy risk: People paste in names, unit numbers, medical accommodation details, security incidents, and arrears information without thinking. That can create real governance and privacy issues, especially when sensitive information gets copied outside the condo’s usual systems.

Practical Guidelines

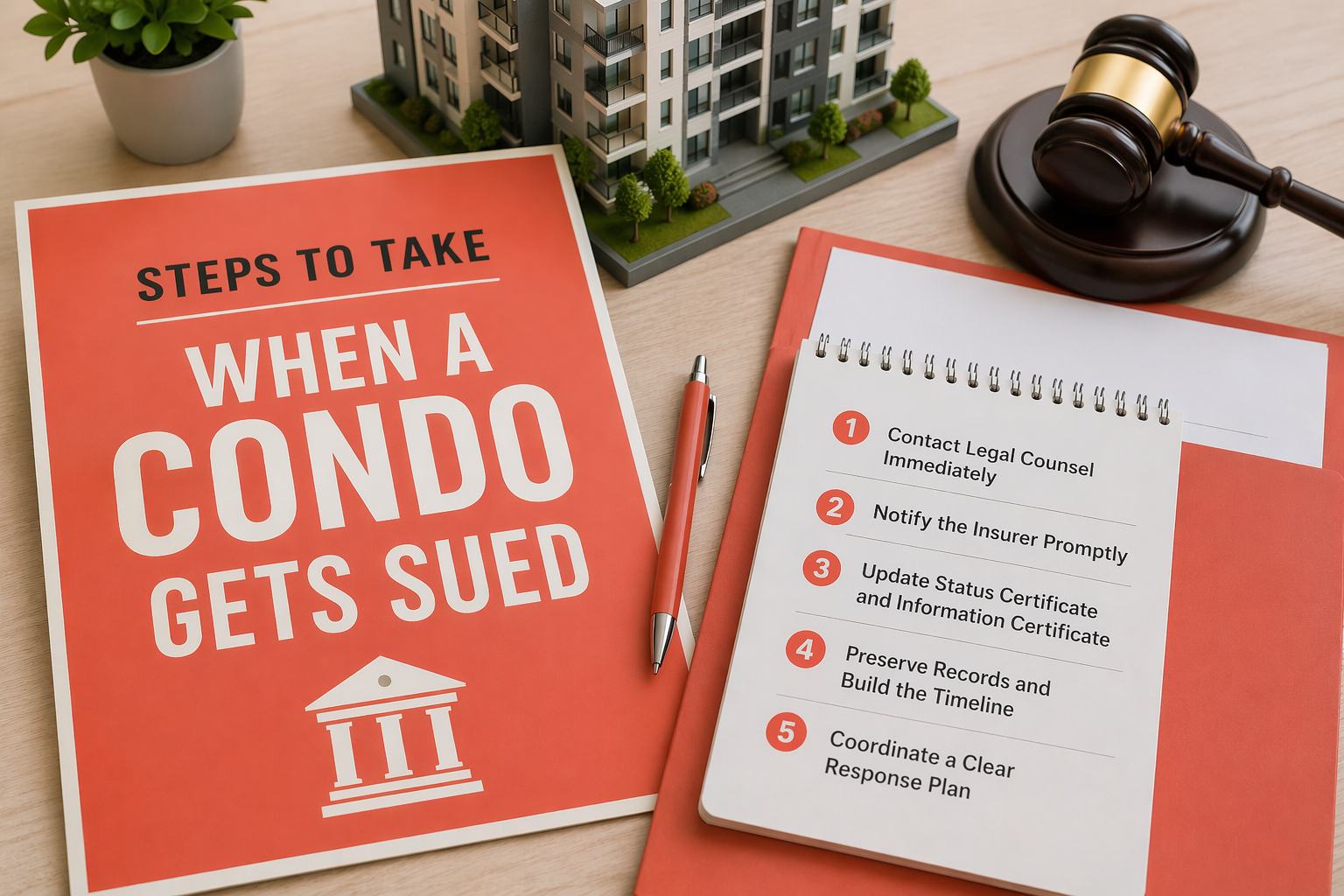

If you are going to use AI to draft condo communications:

- Use AI for structure and tone. Let it help you write, but do not let it tell you what the law is. We’re not quite there (yet?).

- Do not paste sensitive information. Anonymize wherever possible.

- Fact-check the output against the condo’s actual documents and the specific facts before you hit send.

- Keep it short. Condo disputes do not improve with extra adjectives.

A Condo Lawyer’s Two Cents

AI can be a great tool in condo communications, especially for keeping things calm and readable. But it is not a substitute for judgment, and it is definitely not a substitute for legal advice.

Think of AI like a Roomba, the self-navigating robotic vacuum: useful, efficient, and impressive, but sometimes it confidently drives itself into a corner and spends twenty minutes bumping around before needing a human to rescue it.

So yes, you can use the tool. Just make sure you’re still the one running the building.